Can you really run product discovery experiments more effectively with AI?

AI tools promise to accelerate product discovery — but do they? A critical look at where AI actually helps in discovery, and where the bottleneck remains human judgment.

Advanced Product Ownership goes beyond backlog management: it means dealing with the organizational constraints (fixed scope, big design upfront, governance requirements) that prevent real agility.

When Lean Startup hits the reality of large organizations — why validated learning is harder than it sounds and what Kanban thinking adds to the picture.

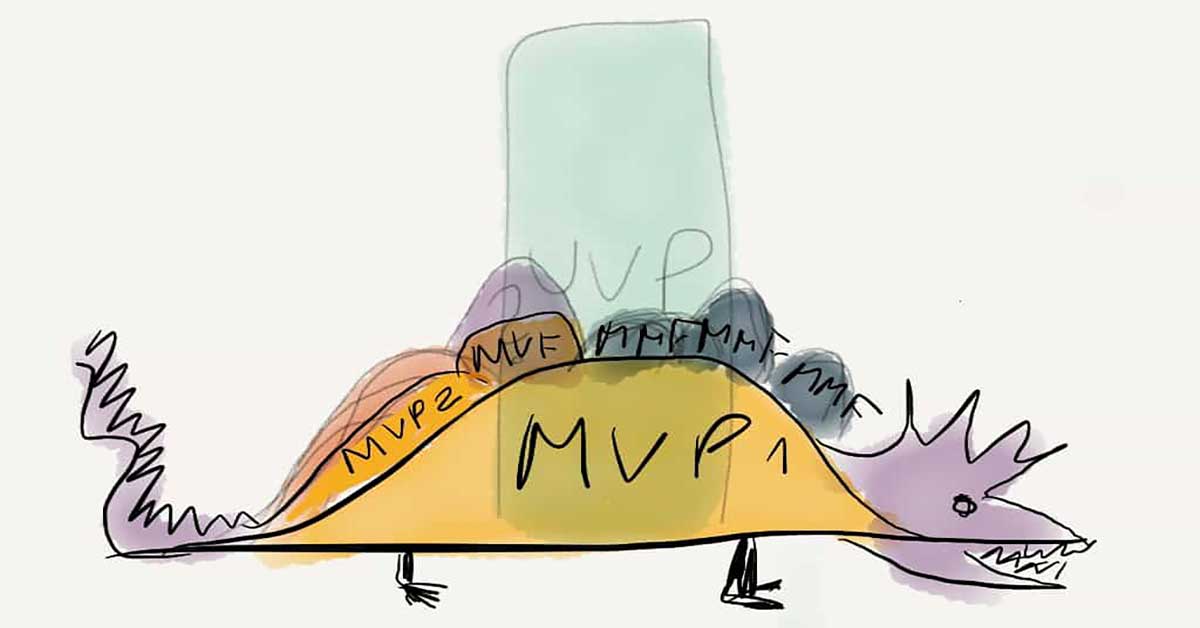

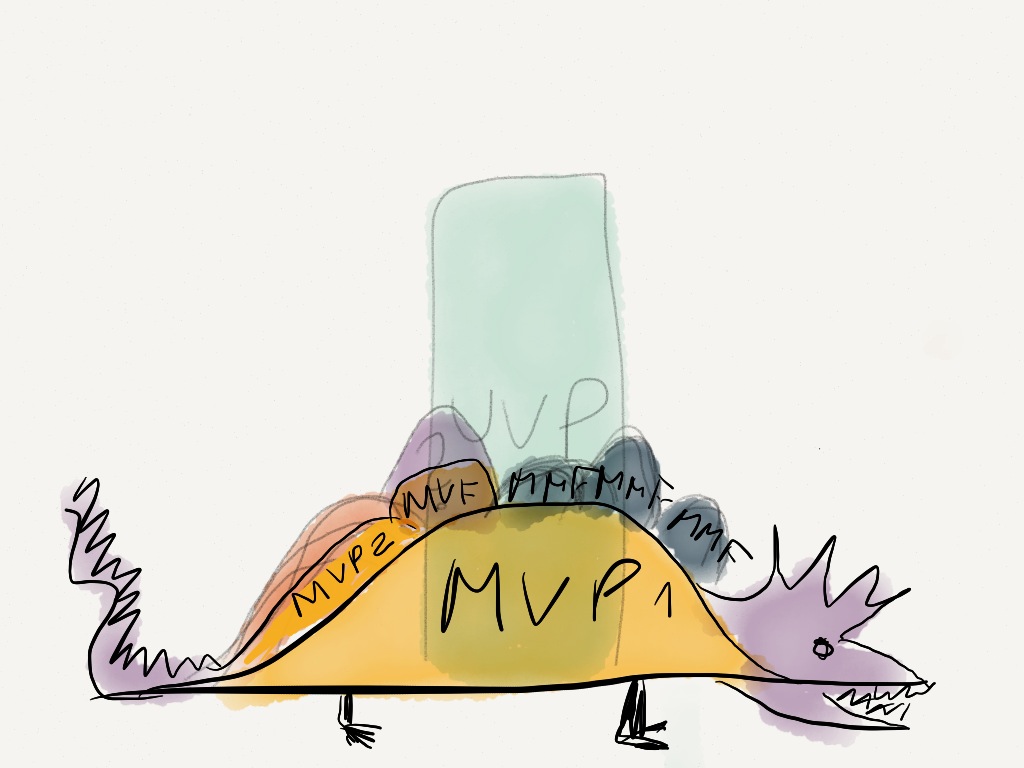

The journey to product-market fit through minimum viable releases: the minimum viable product (MVP), minimum viable feature (MVF), and minimum marketable feature (MMF), explained for teams moving from feature-factory to outcome-focused delivery.

Takeaways from LSSC 2012 Boston, where lean product development flow, Lean Startup, and Kanban converged among practitioners pushing the boundaries of each.

Applying Lean Startup thinking to organizational change: testing change hypotheses with minimum viable interventions before rolling them out broadly.