
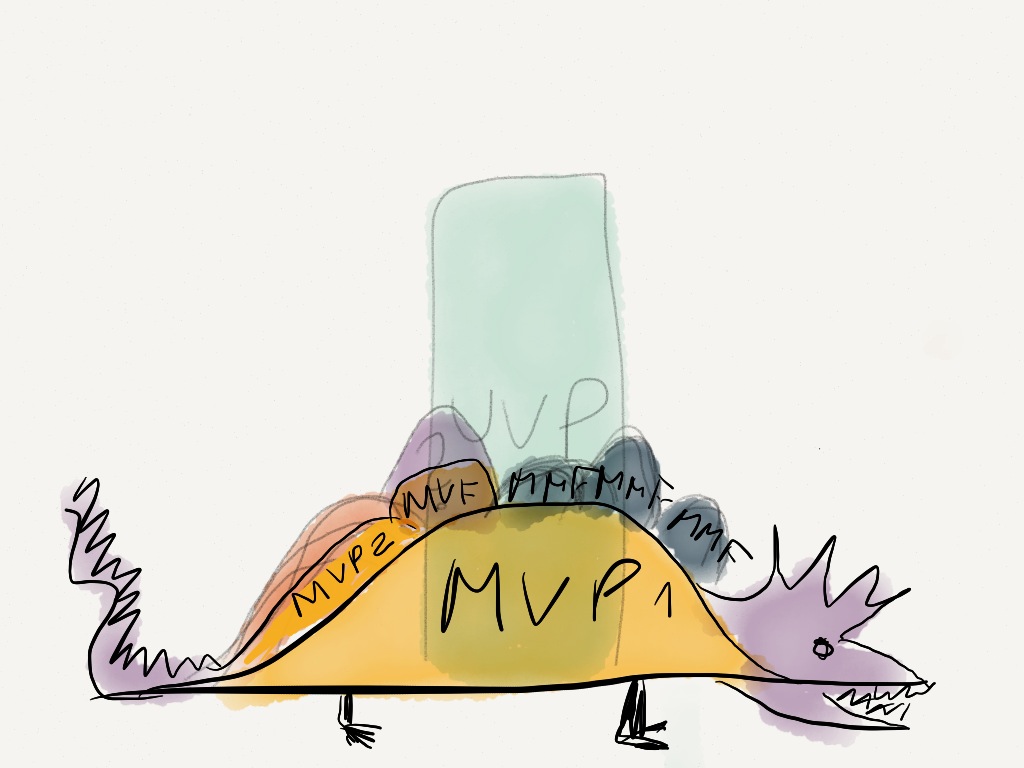

What's the difference between MVP, MMF, and the other Lean/Agile requirement containers?

The journey to product-market fit through minimum viable releases: MVP, MVF, and MMF explained for teams moving from feature-factory delivery to outcome-focused delivery.